How AI and LLMs Help You Find and Rank the Right Targets

Learn how AI and LLMs scrape, analyse and rank thousands of potential targets on fit — faster and more precisely than any manual process.

Apr 16, 2025

7 min.

Joep van Acht

Here's a number most B2B teams would rather not hear: contact data decays at roughly 2.1% per month. That compounds to nearly a quarter of your database going stale every year. And yet, most target lists are still built the same way: someone spends a day on LinkedIn Sales Navigator, exports a CSV, cleans it in a spreadsheet, and calls it done. Two weeks later, people have changed roles, companies have pivoted, and nobody can explain why Company A was ranked above Company B.

We build targeting systems for founders, VC teams, and recruitment professionals, and we see this pattern constantly. The list itself isn't the problem. It's the assumption that a static list can survive contact with reality.

AI, and specifically large language models, change this fundamentally. Instead of browsing and guessing, you define your ideal customer criteria once, and an AI engine continuously scans, scores, and ranks your entire addressable market against those criteria. The result isn't a spreadsheet. It's a living database of targets, ranked by fit, enriched with verified data, and refreshed automatically.

Why Manual Target Lists Break Down

Manual prospecting has three structural weaknesses, and they compound.

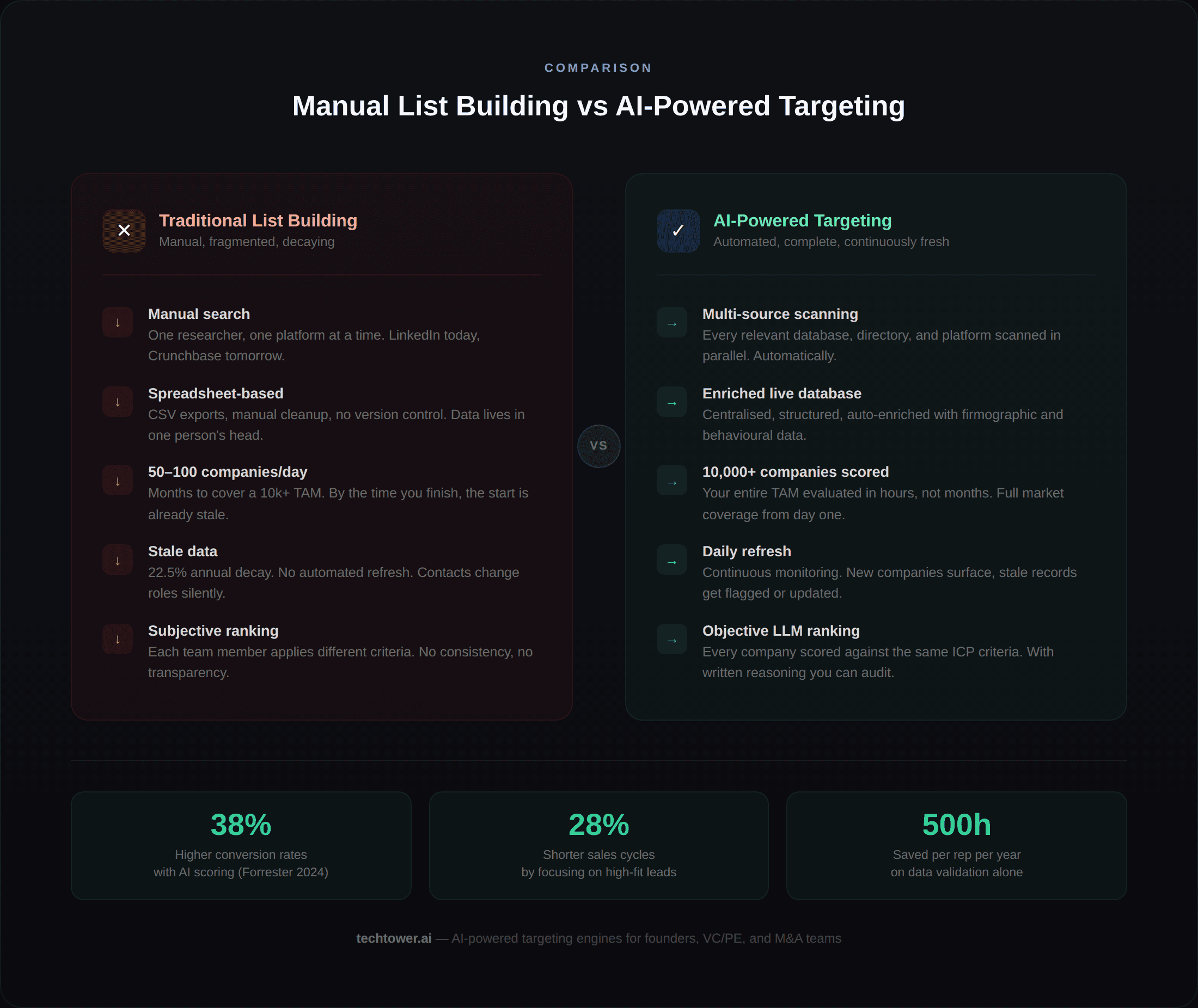

First, coverage is limited. Even a dedicated researcher can realistically evaluate 50–100 companies per day. If your total addressable market is 10,000+ companies, that's months of work before you've even looked at everyone once. Meanwhile, the 38% of companies that are undergoing major changes each year aren't waiting for your researcher to get to them.
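To put rough numbers on that coverage gap, here is a small sketch. The market size and daily pace come from the paragraph above; the function name and the figure of 21 working days per month are our own assumptions, used only for illustration.

```python
import math

WORKING_DAYS_PER_MONTH = 21  # assumption, for illustration only


def days_to_cover(market_size: int, companies_per_day: int) -> int:
    """Working days one researcher needs to look at every company once."""
    return math.ceil(market_size / companies_per_day)


for pace in (50, 100):
    days = days_to_cover(10_000, pace)
    months = days / WORKING_DAYS_PER_MONTH
    print(f"{pace} companies/day: {days} working days (about {months:.1f} months)")
```

Under these assumptions, a single pass over a 10,000-company market takes one researcher somewhere between 100 and 200 working days.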

Second, consistency is poor. Different team members apply different criteria. What one person considers a "strong fit" another might skip entirely. Without a structured scoring framework, your target list reflects individual biases rather than strategic priorities. This is not a minor issue — Forrester's 2024 research found that companies using structured AI scoring saw 38% higher conversion rates specifically because the scoring was consistent across every record.

Third, your data is rotting while you read this. B2B contact information degrades at 2.1% per month. Within a year, nearly a quarter of your database is dead weight: wrong emails, old job titles, people who left six months ago. A study of 1,200 business cards showed that 70.8% had at least one outdated field after just twelve months. The cost isn't abstract: the average sales rep burns 500 hours a year just checking and fixing contact details. That's a quarter of their selling time spent on maintenance, not revenue.
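The "nearly a quarter" figure follows from compounding the monthly decay rate. A minimal check, assuming the 2.1% rate applies to whatever is still valid each month (the function name is ours):

```python
def fraction_stale(monthly_decay: float, months: int) -> float:
    """Share of records gone stale after compounding a monthly decay rate."""
    return 1 - (1 - monthly_decay) ** months


print(f"{fraction_stale(0.021, 12):.1%}")  # 22.5%
```

Simply adding up twelve months of 2.1% would give 25.2%. Compounding gives about 22.5%, because each month's decay applies to a slightly smaller pool of still-valid records.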

How AI Scores and Ranks 10,000+ Companies Against Your ICP

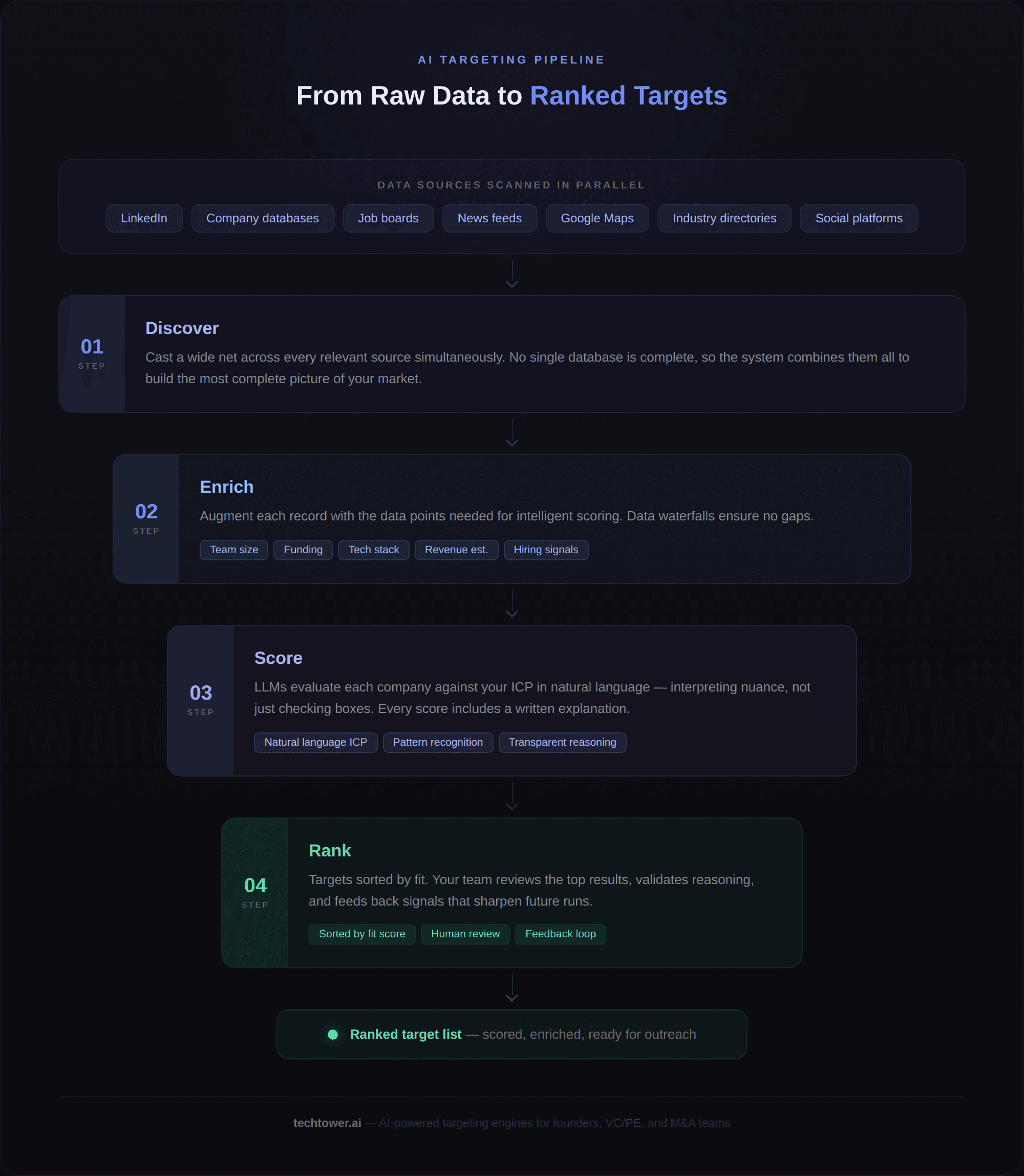

A modern AI targeting system operates in four stages: discover, enrich, score, and rank. Each stage solves a specific failure mode of manual prospecting.

Discover

The system casts a wide net across multiple data sources: company databases, industry directories, job boards, news feeds, social platforms, and even niche sources like Google Maps listings or sector-specific platforms. Rather than relying on a single database (which is always incomplete), it combines sources to build the most complete picture of your market. Think of it as searching every channel your researcher would check, all at once.
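Combining sources only helps if the same company found in two places ends up as one record. The sketch below shows one way that could work, keying each record on a normalised website domain. The field names (`website`, `source`, `open_roles`) and the "first non-empty value wins" rule are assumptions for illustration, not a description of any particular product.

```python
from urllib.parse import urlparse


def normalise_domain(url: str) -> str:
    """Reduce a URL or bare domain to a lowercase host without a leading 'www.'."""
    host = urlparse(url if "//" in url else "//" + url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def merge_sources(*sources: list[dict]) -> dict[str, dict]:
    """Combine records from several sources into one record per domain."""
    merged: dict[str, dict] = {}
    for records in sources:
        for record in records:
            key = normalise_domain(record["website"])
            company = merged.setdefault(key, {"domain": key, "sources": []})
            for field, value in record.items():
                if field == "source":
                    company["sources"].append(value)
                elif field != "website" and value and field not in company:
                    company[field] = value  # first non-empty value wins


    return merged


# Hypothetical records from two different sources describing the same company.
directory = [{"source": "directory", "website": "https://www.acme.io", "name": "Acme"}]
job_board = [{"source": "job_board", "website": "acme.io/careers", "open_roles": 5}]

market = merge_sources(directory, job_board)
print(market["acme.io"])
```

Both records collapse into a single entry for `acme.io` that remembers which sources contributed to it.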

Enrich

Raw company data is rarely enough to score on. The system enriches each record with additional data points: team size, recent funding, technology stack, hiring patterns, social activity, revenue estimates, and more. This enrichment happens automatically through data waterfalls: if one source doesn't have the information, the next one in the chain provides it. The goal is to have enough context per company that the scoring step can make an informed judgement, not just check a box.
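A data waterfall is simple to express in code: ask each provider in turn and stop at the first one that has an answer. In this sketch the providers are in-memory stand-ins; in practice each would wrap a real data source. `make_provider`, `waterfall`, and the sample data are all hypothetical names and values.

```python
from typing import Callable, Optional

# A provider takes (domain, field) and returns a value, or None if it has no data.
Provider = Callable[[str, str], Optional[object]]


def make_provider(data: dict[str, dict]) -> Provider:
    """Build a stand-in provider backed by an in-memory dict (illustration only)."""
    def lookup(domain: str, field: str) -> Optional[object]:
        return data.get(domain, {}).get(field)
    return lookup


def waterfall(domain: str, field: str, providers: list[Provider]) -> Optional[object]:
    """Ask each provider in order and return the first non-empty answer."""
    for provider in providers:
        value = provider(domain, field)
        if value is not None:
            return value
    return None  # no provider in the chain had this field


primary = make_provider({"acme.io": {"team_size": 15}})
fallback = make_provider({"acme.io": {"team_size": 12, "funding": "Seed"}})

team_size = waterfall("acme.io", "team_size", [primary, fallback])  # 15, from primary
funding = waterfall("acme.io", "funding", [primary, fallback])      # "Seed", from fallback
```

The order of the list encodes which source you trust most for a given field, so it is worth choosing deliberately.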

Score

This is where LLMs make the biggest difference, and where most people misunderstand the technology. Traditional lead scoring uses rigid rules: "if headcount > 50 AND sector = SaaS, score = 7." That works when your criteria are simple and binary. But most real-world ICP definitions aren't.

Try encoding this into IF/THEN rules: "We want mid-market SaaS companies with a product-led growth motion and a technical founding team, ideally in the productivity or developer tooling space, that have shown signs of 2x revenue growth in the last 18 months." Good luck. An LLM parses that description naturally. You feed it your ideal customer profile in plain language, including qualitative factors like "companies showing signs of rapid growth" or "teams with a technical founder who previously worked at a scale-up", and the model evaluates each company against that description.

The model doesn't just check boxes. It reads company descriptions, analyses hiring patterns, interprets recent activity, and generates a relevance score with a brief explanation of why each company scored the way it did. That reasoning is visible to your team, making the scoring transparent rather than a black box.
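In code, the scoring step could look roughly like the sketch below: build a prompt from the plain-language ICP and the enriched profile, ask the model for a score plus a reason, and refuse to trust any reply that doesn't parse. Everything here is an assumption made for illustration: the prompt wording, the 0 to 10 scale, the JSON reply format, and `call_model`, which stands in for whichever LLM client you use. `fake_model` returns a canned reply so the example runs offline.

```python
import json
from typing import Callable

PROMPT = """Score this company against the ideal customer profile (ICP).

ICP:
{icp}

Company profile:
{profile}

Reply with JSON only: {{"score": <integer 0-10>, "reason": "<one or two sentences>"}}"""


def score_company(icp: str, profile: dict, call_model: Callable[[str], str]) -> dict:
    """Ask the model for a score plus a reason; flag anything we cannot parse."""
    reply = call_model(PROMPT.format(icp=icp, profile=json.dumps(profile, indent=2)))
    try:
        parsed = json.loads(reply)
        score, reason = int(parsed["score"]), str(parsed["reason"])
        if not 0 <= score <= 10:
            raise ValueError("score out of range")
    except (ValueError, KeyError, TypeError):
        # Malformed or out-of-range replies go to a human instead of the ranking.
        return {"score": None, "reason": "unusable model reply", "needs_review": True}
    return {"score": score, "reason": reason, "needs_review": False}


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    return '{"score": 8, "reason": "Product-led SaaS with a technical founder."}'


result = score_company(
    "Mid-market SaaS with a product-led growth motion and a technical founding team.",
    {"name": "Acme", "description": "Developer tooling, self-serve signup."},
    fake_model,
)
print(result)
```

Keeping the reason next to the score is what makes the ranking explainable later on.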

A word of realism: LLMs are not infallible. They occasionally hallucinate or misjudge context, especially with very thin company profiles. That's why the human review step matters. The AI handles the heavy lifting of scoring thousands of companies; your team handles the judgement calls on the top 50–100.

Rank

What comes out is a prioritised list your team can actually work with: sorted by fit, with a written explanation behind every score. No black-box rankings. Your team reviews the top results, flags what's right and what's off, and that feedback feeds directly back into the model. The scoring improves with every cycle. This is where AI and human judgement reinforce each other: the system handles volume, your team handles nuance.
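The ranking step itself is the simplest part. A minimal sketch, assuming each scored record carries a `score` and a `reason` (our field names), with unscored records left out of the ranking rather than guessed at:

```python
def rank_targets(scored: list[dict], review_size: int = 50) -> tuple[list[dict], list[dict]]:
    """Sort scored companies by fit and return (full ranking, top slice for review)."""
    usable = [c for c in scored if c.get("score") is not None]
    ranked = sorted(usable, key=lambda c: c["score"], reverse=True)
    return ranked, ranked[:review_size]


# Hypothetical scored records, including one the model could not score.
scored = [
    {"name": "Acme", "score": 8, "reason": "PLG motion, technical founder."},
    {"name": "Globex", "score": 3, "reason": "Services-led, no product signals."},
    {"name": "Initech", "score": 9, "reason": "Developer tooling, hiring burst."},
    {"name": "Umbrella", "score": None, "reason": "unusable model reply"},
]

ranked, review_queue = rank_targets(scored, review_size=2)
for company in review_queue:
    print(f'{company["score"]:>2}  {company["name"]}: {company["reason"]}')
```

The `review_size` default of 50 mirrors the lower end of the top 50–100 your team would review by hand.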

From Companies to People: Finding the Right Contact

Identifying the right company is only half the job. You also need the right person within that company.

Once a company scores above your threshold, the system identifies relevant individuals based on the job titles and seniority levels you specify. It finds up to three contacts per company, enriches their profiles with verified email addresses (using multi-source validation to ensure deliverability), and adds them to your outreach pipeline.
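As a rough sketch of that selection logic: only companies above the threshold get contacts, only people whose title matches your target roles qualify, and only verified emails make it into the pipeline. The field names (`title`, `email_verified`), the substring title match, and the sample people are assumptions for illustration.

```python
def select_contacts(
    company_score: int,
    people: list[dict],
    *,
    threshold: int,
    target_titles: list[str],
    max_contacts: int = 3,
) -> list[dict]:
    """Return up to max_contacts verified people with matching titles."""
    if company_score < threshold:
        return []  # company is not a strong enough fit to contact at all
    wanted = [title.lower() for title in target_titles]
    matches = [
        person for person in people
        if person.get("email_verified")
        and any(title in person["title"].lower() for title in wanted)
    ]
    return matches[:max_contacts]


# Hypothetical people at one company.
people = [
    {"name": "Ana", "title": "VP Operations", "email_verified": True},
    {"name": "Ben", "title": "CEO", "email_verified": True},
    {"name": "Cleo", "title": "Head of Operations", "email_verified": False},
]

contacts = select_contacts(8, people, threshold=7, target_titles=["operations"])
print([person["name"] for person in contacts])  # ['Ana']
```

Cleo has the right title but an unverified email, so she is held back until verification succeeds.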

This people-level targeting matters more than most teams appreciate. Reaching the CEO when you should be talking to the VP Operations wastes everyone's time. But reaching the right person with a stale email address is arguably worse: you've done the targeting work correctly and still get nothing back. Multi-source email verification exists precisely for this reason.

Why LLMs Outperform Rule-Based Scoring

LLMs handle ambiguity in ways that rule-based systems simply cannot. They catch patterns that rigid filters miss: a company website that describes "recurring licence fees" without ever using the word "SaaS." A founder with a PhD in machine learning and two open-source repos on GitHub, but whose title just says "CEO." A 15-person company that posted five engineering roles in the same week, a hiring burst that signals funding before it's announced. Or a firm that just moved from a co-working space to its own office, a growth indicator that lives in a Google Maps update, not in a CRM field.

The practical impact is fewer false positives (companies that look good on paper but aren't) and fewer false negatives (companies that are a great fit but wouldn't pass a rigid filter). Deloitte's 2024 research found that companies using AI for lead scoring and targeting experienced a 20–30% rise in conversion rates. Forrester reports 28% shorter sales cycles through focusing on high-quality leads. These numbers aren't theoretical. They're what happens when you stop filtering on surface-level data and start evaluating on actual fit.
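To make the false-negative case concrete, here is the rigid rule quoted earlier ("if headcount > 50 AND sector = SaaS, score = 7") written out, together with a company it would miss. The fallback score of zero and both sample records are assumptions for illustration.

```python
def rule_score(company: dict) -> int:
    """The rigid rule quoted earlier: headcount > 50 AND sector = SaaS gives 7."""
    if company.get("headcount", 0) > 50 and company.get("sector") == "SaaS":
        return 7
    return 0  # assumption: anything that fails the rule scores zero


labelled_saas = {"headcount": 80, "sector": "SaaS"}
unlabelled_saas = {
    "headcount": 80,
    "sector": "Software",
    "description": "Workflow platform sold on recurring licence fees.",
}

print(rule_score(labelled_saas))    # 7
print(rule_score(unlabelled_saas))  # 0
```

The second company is filtered out purely because of how its sector field was labelled. The rule never looks at the description, which is exactly where an LLM would find the "recurring licence fees" signal.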

Getting Started: What You Need

Building an AI-powered targeting system requires three inputs from your side. None of them are technical.

First, a clear ICP definition. The more specific you are about what makes a company and contact a good fit, the better the system performs. This isn't a vague exercise. It typically starts with a structured questionnaire covering your ideal customer's firmographics, behavioural signals, and the qualitative factors that matter to your team. The difference between "we sell to mid-market SaaS" and a genuinely useful ICP is usually about 30 minutes of focused conversation.

Second, agreement on which sources to scan. Depending on your market, this might include standard B2B databases, LinkedIn, industry directories, or more creative sources. A B2B SaaS company and a professional services firm targeting the manufacturing sector need very different source configurations.

Third, feedback. The system improves through your team's input. Reviewing the first batch of scored targets and providing clear yes/no/maybe signals calibrates the model rapidly. Most systems show meaningful improvement after a single feedback round.
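How feedback gets folded back in varies by system. One simple possibility, shown here purely as an illustration and not as a description of how any particular engine calibrates, is to use the yes/no labels to pick the score cut-off that best separates accepted from rejected targets. "Maybe" labels are ignored in this sketch, and the 0 to 10 scale is an assumption.

```python
def best_threshold(feedback: list[tuple[int, str]]) -> int:
    """Pick the lowest cut-off (0-10) that misclassifies the fewest yes/no labels."""
    decided = [(score, label) for score, label in feedback if label in ("yes", "no")]

    def errors(cutoff: int) -> int:
        # An error is a 'yes' below the cut-off or a 'no' at or above it.
        return sum((score >= cutoff) != (label == "yes") for score, label in decided)

    return min(range(0, 11), key=errors)


# Hypothetical first feedback round: (model score, reviewer label).
first_round = [(9, "yes"), (8, "yes"), (7, "maybe"), (6, "no"), (4, "no")]
print(best_threshold(first_round))  # 7
```

Other approaches, such as feeding labelled examples back into the scoring prompt, follow the same loop: review, label, adjust, rescore.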

From there, the system runs autonomously: refreshing data, rescoring companies as their circumstances change, and feeding new high-fit targets into your pipeline on a continuous basis.
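Rescoring "as circumstances change" implies some way of noticing that a profile has changed. A minimal sketch, assuming profiles are plain dictionaries: store a fingerprint of each profile at scoring time, and rescore only the companies whose fingerprint differs on the next refresh. The function names and sample data are hypothetical.

```python
import hashlib
import json


def profile_fingerprint(profile: dict) -> str:
    """Stable hash of a profile; key order does not affect the result."""
    payload = json.dumps(profile, sort_keys=True, default=str)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def companies_to_rescore(current: dict[str, dict], seen: dict[str, str]) -> list[str]:
    """Domains that are new or whose profile changed since the last scoring run."""
    return [
        domain for domain, profile in current.items()
        if seen.get(domain) != profile_fingerprint(profile)
    ]


# Fingerprints stored after the previous scoring run.
last_run = {"acme.io": profile_fingerprint({"team_size": 15, "open_roles": 0})}

# Refreshed data: Acme has started hiring, and Globex is newly discovered.
refreshed = {
    "acme.io": {"open_roles": 5, "team_size": 15},
    "globex.com": {"team_size": 40},
}

print(companies_to_rescore(refreshed, last_run))  # ['acme.io', 'globex.com']
```

Only changed or new companies go back through the scoring step, which keeps continuous refreshes cheap.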

Ready to Map and Rank Your Entire Target Market?

TechTower builds AI-powered targeting engines that scrape, enrich, score, and rank your full addressable market. You get a clean, prioritised pipeline of companies and contacts — updated automatically, scored against your specific criteria.

→ Get a map of all your targets — start with a free target mapping session to see what your market looks like.

→ See our pricing to understand what's included.